Netflix Architecture System Design: A Step-by-Step Breakdown

How Netflix architecture powers seamless streaming worldwide


Every time you press play on Netflix, a large distributed system springs into action. In roughly the next 2 seconds, before a single frame appears on your screen, Netflix has authenticated your session, checked your regional licensing, identified your device and network speed, selected the nearest edge server from one of 1000+ global locations, generated a personalised manifest of video segments in the right codec and resolution for your device, and issued a DRM key to protect the content.

Netflix has 283 million subscribers across 190 countries. At peak hours, it handles tens of millions of simultaneous streams. It streams to both a user with 100 Mbps fibre in South Korea and a user with 5 Mbps mobile data in rural India, adapting the quality to what each connection can sustain. The same infrastructure that handles a normal Tuesday night also serves the moments when a major season finale drops and viewership spikes 10x in minutes.

Understanding how Netflix works is a masterclass in distributed systems, microservices, content delivery, data engineering, and resilience engineering. Every major concept in modern backend architecture appears in Netflix’s stack: event-driven design, sharding, CDN, adaptive streaming, recommendation ML, and chaos engineering.

This blog walks through the complete system step by step, from the moment you press play to the first frame appearing.

Netflix System Design refers to the complete architecture of how Netflix stores, processes, distributes, and streams video content to millions of users simultaneously while providing personalised recommendations, instant search, secure access, and zero-downtime reliability.

The system has three primary layers: the Client (your device, which handles adaptive bitrate selection, DRM key management, and buffering), Open Connect (Netflix’s globally distributed CDN sitting inside ISP networks, which handles approximately 95% of all Netflix video traffic), and the AWS Backend (the cloud infrastructure running all non-video operations like authentication, recommendations, search, billing, and video processing).


When you open Netflix and press play, the request flow splits into two paths. For non-video requests such as login, browsing, search, and recommendations, your device talks to Netflix’s API Gateway (Zuul), which routes requests to the appropriate microservice running on AWS. For video playback, your device talks directly to the nearest Open Connect edge server inside your ISP’s network.

The key architectural principle is that video bytes almost never travel through AWS during normal playback. This is what allows Netflix to serve tens of terabits per second of video traffic without those bytes ever reaching their cloud infrastructure. The backend lives entirely on Amazon Web Services. The middle layer, Open Connect, is Netflix’s private invention that makes the whole thing scale economically.

When you open Netflix and log in, your device sends your email and password to the Netflix Auth Service over HTTPS.

The Auth Service validates your credentials against the Users database and, on success, generates a JWT (JSON Web Token) containing your user ID, device ID, expiry time, and subscription tier. This token is returned to your device and stored locally.

Every subsequent API request includes this JWT, and any microservice can validate it independently without querying a central session database. This makes authentication stateless and scalable across hundreds of services without a bottleneck.


JWT-based authentication is far more scalable than traditional session-based auth. With sessions, every service would need to query a central session store on every request. With JWT, the token itself contains all the necessary information, cryptographically signed so it cannot be tampered with.

Netflix also enforces concurrent stream limits at the Playback Service level. Standard plan users can stream on two devices simultaneously and Premium users on four. If a third device attempts to start a stream under the Standard plan, the Playback Service rejects the request. Multiple profiles within the same account each maintain separate watch history, preferences, and recommendation profiles.


Netflix runs on hundreds of independent microservices rather than a single large application. Each service is responsible for one business capability, owns its own database, and communicates with other services through APIs or events.

The key services include the AuthService for login and session management, UserProfileService for watch history and preferences, RecommendationService for personalised content suggestions, SearchService for the search bar, PlaybackService for managing video manifests and DRM, BillingService for payments and subscriptions, and AnalyticsService for event ingestion and metrics.

Services communicate in two ways. Synchronous communication using REST or gRPC is used when an immediate response is required, such as the PlaybackService calling the ContentService to fetch a video manifest.

Asynchronous communication through Apache Kafka is used for events that do not require immediate handling, such as publishing a “user watched 80% of a show” event that the RecommendationService, AnalyticsService, and UserProfileService each consume independently at their own pace.
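The "consume independently at their own pace" behaviour can be illustrated with a small in-memory stand-in for a log-based broker. This is not the Kafka client API; `EventLog`, `publish`, and `poll` are hypothetical names that only mimic the idea of one shared log with a separate read offset per consumer group.

```python
from collections import defaultdict


class EventLog:
    """Append-only in-memory log; each consumer group tracks its own offset."""

    def __init__(self) -> None:
        self._events: list[dict] = []
        self._offsets: dict[str, int] = defaultdict(int)

    def publish(self, event: dict) -> None:
        # The producer does not know or care who will read the event.
        self._events.append(event)

    def poll(self, group: str, max_events: int = 10) -> list[dict]:
        # Each group resumes from where it last stopped, independent of others.
        start = self._offsets[group]
        batch = self._events[start:start + max_events]
        self._offsets[group] = start + len(batch)
        return batch


log = EventLog()
log.publish({"type": "watched_80_percent", "user": "u1", "title": "t9"})
log.publish({"type": "watched_80_percent", "user": "u2", "title": "t3"})

fast = log.poll("recommendations")           # reads both events at once
slow = log.poll("analytics", max_events=1)   # reads one now, one later
```

Because offsets are per group, a slow consumer never blocks a fast one, and the producer returns as soon as the event is appended.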

All external client requests enter through Netflix’s Zuul API Gateway, which handles routing, authentication validation, rate limiting, and logging. From the client’s perspective, there is one endpoint.

Zuul decides which microservice handles each request. Netflix also built Eureka for service discovery, since services run on dynamic cloud instances where IP addresses change constantly and cannot be hardcoded. Every service registers itself with Eureka on startup, and other services ask Eureka for the current address whenever they need to communicate.
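The register-then-lookup pattern can be sketched as a small in-memory registry. This is not Eureka's API; the class, the heartbeat method, and the 30-second time-to-live are assumptions chosen to show why callers never need a hardcoded address.

```python
import time
from typing import Callable


class ServiceRegistry:
    """Tracks live instances per service name; stale entries expire."""

    def __init__(self, ttl_seconds: float = 30.0,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self._ttl = ttl_seconds
        self._clock = clock
        # service name -> {address: time the instance was last seen}
        self._last_seen: dict[str, dict[str, float]] = {}

    def register(self, service: str, address: str) -> None:
        self._last_seen.setdefault(service, {})[address] = self._clock()

    def heartbeat(self, service: str, address: str) -> None:
        # A heartbeat simply refreshes the last-seen timestamp.
        self.register(service, address)

    def lookup(self, service: str) -> list[str]:
        # Only instances seen within the TTL are returned to callers.
        now = self._clock()
        instances = self._last_seen.get(service, {})
        return sorted(a for a, seen in instances.items() if now - seen <= self._ttl)
```

An instance that crashes stops sending heartbeats and drops out of `lookup` results once its entry is older than the TTL, so callers stop routing to it without any manual configuration change.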

For fault isolation, Netflix uses Hystrix as a circuit breaker. In a distributed system, a slow service can cascade failures across the entire platform. If the RecommendationService starts taking 10 seconds to respond, all PlaybackService threads might queue up waiting for it, eventually making playback unresponsive too.

Hystrix detects when a service is failing and trips the circuit, causing subsequent calls to return immediately with a cached fallback rather than waiting. After a recovery timeout, Hystrix tests whether the service has recovered before restoring full traffic.
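The trip, fail-fast, and probe cycle described above can be sketched as a small state machine. This is a simplified illustration rather than Hystrix itself: the consecutive-failure threshold, the timeout value, and the class name are assumptions, and real Hystrix uses rolling error-rate windows and thread-pool isolation.

```python
import time
from typing import Any, Callable, Optional


class CircuitBreaker:
    """closed -> open after repeated failures -> half_open after a timeout."""

    def __init__(self, failure_threshold: int = 3, recovery_timeout: float = 5.0,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self.failure_threshold = failure_threshold
        self.recovery_timeout = recovery_timeout
        self._clock = clock
        self._failures = 0
        self._opened_at: Optional[float] = None

    @property
    def state(self) -> str:
        if self._opened_at is None:
            return "closed"
        if self._clock() - self._opened_at >= self.recovery_timeout:
            return "half_open"  # allow one trial call through
        return "open"

    def call(self, func: Callable[[], Any], fallback: Callable[[], Any]) -> Any:
        if self.state == "open":
            return fallback()  # fail fast without touching the dependency
        try:
            result = func()
        except Exception:
            self._failures += 1
            if self.state == "half_open" or self._failures >= self.failure_threshold:
                self._opened_at = self._clock()  # trip, or re-trip after a failed probe
            return fallback()
        # Success closes the circuit and clears the failure count.
        self._failures = 0
        self._opened_at = None
        return result
```

A caller would wrap the slow dependency, for example `breaker.call(fetch_recommendations, lambda: cached_rows)`, where both callables are hypothetical. While the circuit is open, the caller's threads return immediately instead of queueing behind a 10-second response.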


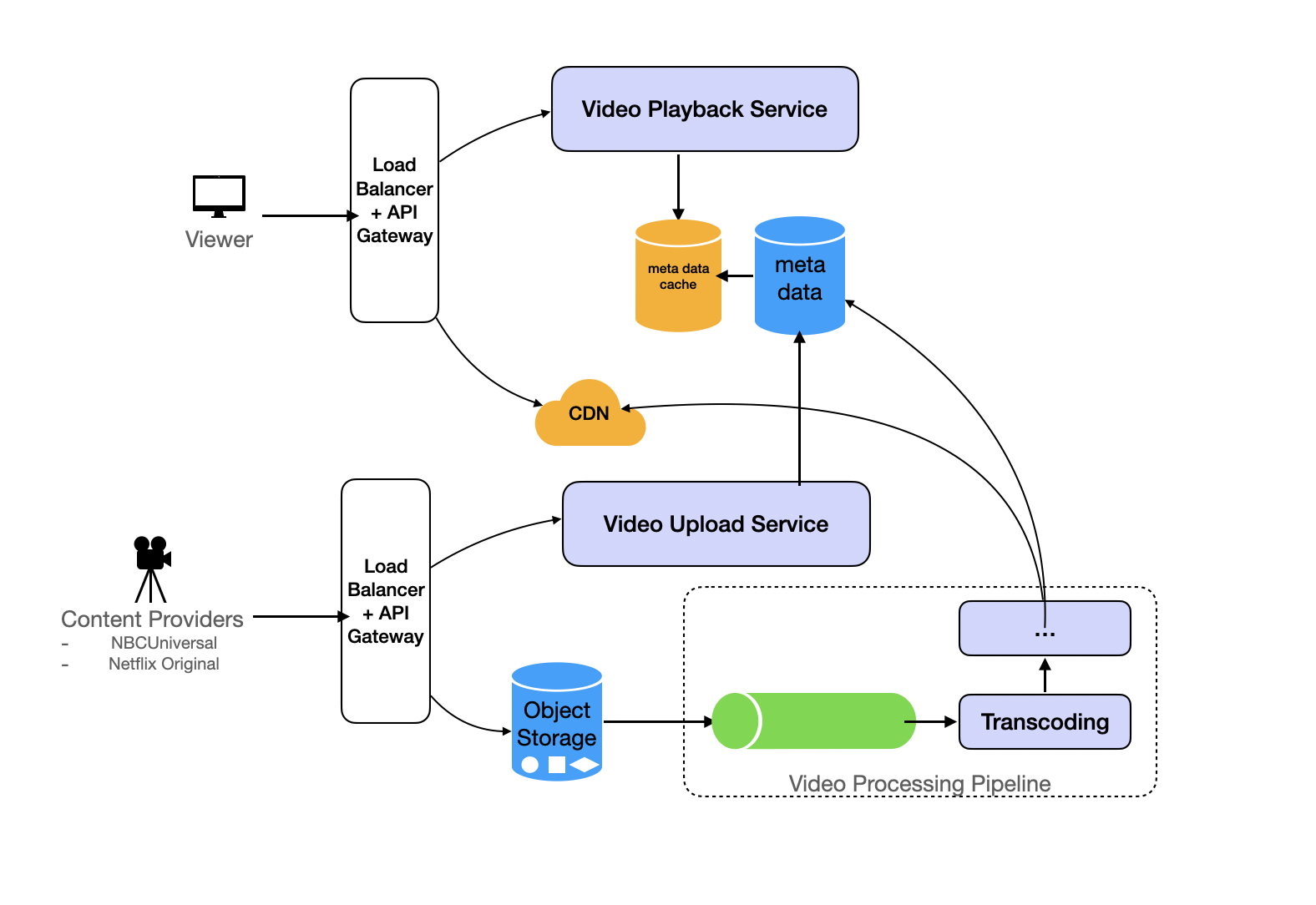

Before a single user can watch a Netflix title, the raw video file goes through an intensive processing pipeline. A studio delivers a raw video file, which for a two-hour 8K production can easily be 4TB in size. This file is uploaded to AWS S3 as the master origin.

The first stage is automated validation and quality checks covering codec compatibility, audio sync, and subtitle files, followed by ML-based content moderation. After validation, the video is split into small chunks using scene-based chunking rather than time-based chunking, meaning each chunk corresponds to a complete scene.

This produces a better streaming experience because when the client requests a chunk, it receives a complete scene rather than a fragment of one.

The chunked segments then enter the transcoding stage, which runs on parallel AWS EC2 workers. Each chunk is independently converted into multiple codecs including H.264, HEVC, and AV1, and multiple resolutions including 240p, 480p, 720p, 1080p, 1080p HDR, 4K, and 4K HDR. By processing chunks in parallel, Netflix can transcode an entire movie in a fraction of the time sequential processing would require.
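The fan-out of chunks across workers can be sketched with a thread pool. The `transcode` function here is a placeholder that only returns an output name (a real pipeline would invoke an encoder), and the codec and resolution lists are a shortened, illustrative subset of the ladder named above.

```python
from concurrent.futures import ThreadPoolExecutor
from itertools import product

CODECS = ["h264", "hevc", "av1"]
RESOLUTIONS = ["240p", "480p", "720p", "1080p", "4k"]  # subset, for brevity


def transcode(chunk_id: int, codec: str, resolution: str) -> str:
    """Placeholder for a real encoder call; returns the output file name."""
    return f"chunk{chunk_id:04d}_{codec}_{resolution}.mp4"


def transcode_title(num_chunks: int, workers: int = 8) -> list[str]:
    """Encode every (chunk, codec, resolution) combination concurrently."""
    jobs = list(product(range(num_chunks), CODECS, RESOLUTIONS))
    with ThreadPoolExecutor(max_workers=workers) as pool:
        # map() returns results in job order even though work runs concurrently.
        return list(pool.map(lambda job: transcode(*job), jobs))


outputs = transcode_title(num_chunks=4)
```

Each job depends only on its own chunk, so throughput grows with the number of workers; that independence is what makes chunk-level parallelism effective.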

After transcoding, segments are packaged into HLS and MPEG-DASH streaming formats with corresponding manifest files. Segments are then encrypted using Widevine for Android and Chrome, FairPlay for Apple devices, and PlayReady for Microsoft platforms. Finally, the finished segments are distributed to Open Connect appliances worldwide. For anticipated popular releases, Netflix pre-positions content on edge servers before the release date so the video is already waiting when users press play.

The result is that Netflix creates approximately 1100 to 1200 different encoded file versions of every single title in its library.

When you press play, Netflix does not send you one video file at one fixed quality level. Instead, it sends a continuous stream of small 2 to 6 second segments and dynamically switches quality based on your real-time network conditions.

The process begins with the client requesting a manifest file from the Playback Service. The manifest lists every available quality variant from 240p at 300 kbps up to 4K at 16 Mbps. The client starts conservatively by requesting the first segment at a moderate quality, then measures how fast that segment arrived. If it downloaded much faster than expected, the client steps up to higher quality for the next segment. If it arrived more slowly than expected, it steps down to preserve smooth playback.

The client always tries to maintain a pre-buffered cushion of 6 to 10 segments ahead of the current playback position. This buffer absorbs brief network drops without causing visible pausing. The quality switching is seamless and automatic, driven entirely by the client player. The server’s only role is to have every quality variant available. On a fast connection, you see 4K. On mobile data, you see 480p. The transition between qualities happens invisibly mid-playback.
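A throughput-based version of this client logic can be sketched in a few lines. The 300 kbps and 16 Mbps endpoints come from the manifest description above; the intermediate rungs, the 0.8 safety factor, and the low-buffer threshold are hypothetical values, and real players use more sophisticated estimators.

```python
# Bitrate ladder in kbps; the middle rungs are illustrative assumptions.
LADDER_KBPS = [300, 750, 1500, 3000, 6000, 16000]


def measured_throughput_kbps(segment_bytes: int, download_seconds: float) -> float:
    """Estimate network throughput from how fast the last segment arrived."""
    return segment_bytes * 8 / 1000 / download_seconds


def next_bitrate(throughput_kbps: float, buffer_segments: int,
                 safety: float = 0.8, low_buffer: int = 2) -> int:
    """Pick the bitrate for the next segment.

    Drops to the lowest rung when the buffer is nearly empty; otherwise
    picks the highest rung that fits within a safety margin of throughput.
    """
    if buffer_segments <= low_buffer:
        return LADDER_KBPS[0]
    budget = throughput_kbps * safety
    affordable = [rate for rate in LADDER_KBPS if rate <= budget]
    return affordable[-1] if affordable else LADDER_KBPS[0]


# A 1.5 MB segment that arrived in one second implies about 12 Mbps.
throughput = measured_throughput_kbps(1_500_000, 1.0)
choice = next_bitrate(throughput, buffer_segments=8)
```

The safety factor keeps the player from choosing a rung that exactly matches measured throughput, which would leave no headroom for the buffer to refill.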

Open Connect is the most distinctive and economically critical part of Netflix’s architecture. Rather than paying commercial CDN providers, Netflix built its own global content delivery network and places Open Connect Appliances directly inside partner ISP networks. Netflix currently has over 1000 OCA locations worldwide inside carriers like Comcast, AT&T, Jio, BT, and Deutsche Telekom.


When you stream a video, your request goes to the Open Connect server sitting inside your ISP’s own infrastructure, often in the same data centre as the equipment that connects your neighbourhood to the internet. In the common case, the video bytes never cross the public internet. This is why Netflix can stream with low latency regardless of where AWS servers are physically located.

Netflix’s recommendation system processes 35 billion user interaction events per day to produce each user’s personalised homepage. The signals used include what you watched and how much of it you completed, what you abandoned early, your ratings, what you hovered over while browsing without clicking, what time of day you watch, which device you use, and what other users with similar viewing histories have been watching.

The core recommendation system uses two main approaches. Collaborative filtering finds users with similar viewing profiles and recommends titles they enjoyed that you have not yet seen. Content-based filtering matches the metadata attributes of titles such as genre, themes, cast, and production style to your demonstrated preference profile. Netflix blends both approaches along with dozens of additional signals into a hybrid model.

Heavy ML model training runs in offline batch mode using Apache Spark clusters on a nightly or hourly cycle. These jobs train models on billions of data points and produce embedding vectors, which are numerical representations of each user’s preferences and each title’s characteristics. The predicted affinity between a user and a title comes from the mathematical relationship between their respective vectors.
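One common choice for the "mathematical relationship" between two embedding vectors is cosine similarity. The sketch below assumes that measure and uses made-up two-dimensional vectors; the article does not state which similarity function or vector size Netflix uses.

```python
import math
from typing import Sequence


def cosine(a: Sequence[float], b: Sequence[float]) -> float:
    """Cosine of the angle between two vectors; 1.0 means same direction."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def rank_titles(user_vec: Sequence[float],
                title_vecs: dict[str, Sequence[float]]) -> list[str]:
    """Order titles from highest to lowest predicted affinity."""
    return sorted(title_vecs, key=lambda t: cosine(user_vec, title_vecs[t]), reverse=True)


# Hypothetical embeddings: axis 0 ~ "action", axis 1 ~ "romance".
user = [1.0, 0.0]
titles = {"heist_thriller": [0.9, 0.1], "period_romance": [0.0, 1.0], "rom_com_caper": [0.5, 0.5]}
ranking = rank_titles(user, titles)
```

Because the vectors are produced offline, serving a recommendation reduces to cheap arithmetic or a cache lookup at request time.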

Netflix runs approximately 150 Elasticsearch clusters across about 3500 nodes to power its search functionality. The search index contains title names in all languages and alternate titles, cast and crew names, genres, keywords, mood tags, synopsis text, release year, content rating, and runtime.

When a user types a query, the system performs prefix and fuzzy matching simultaneously, enabling autocomplete suggestions to appear while typing. Fuzzy matching corrects misspellings, so typing “Hary Poter” correctly returns Harry Potter results. Search results are personalised by blending relevance signals with the user’s preference profile. A user who primarily watches Korean action films will see different results for the same query “action” than a user who primarily watches Hollywood blockbusters.
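The combination of prefix and fuzzy matching can be illustrated with the standard library's `difflib`. This is only a toy stand-in for an Elasticsearch query: the catalogue, the 0.6 similarity cutoff, and the `suggest` function are assumptions, and personalised ranking is left out.

```python
import difflib

# Hypothetical catalogue, for illustration only.
TITLES = ["Harry Potter", "Stranger Things", "The Crown", "Narcos", "Squid Game"]


def suggest(query: str, titles: list[str] = TITLES,
            limit: int = 3, cutoff: float = 0.6) -> list[str]:
    """Return prefix matches first, then fuzzy matches, without duplicates."""
    q = query.strip().lower()
    if not q:
        return []
    by_lower = {title.lower(): title for title in titles}
    # Prefix matching drives autocomplete while the user is still typing.
    prefix_hits = [title for low, title in by_lower.items() if low.startswith(q)]
    # Fuzzy matching tolerates misspellings by comparing whole strings.
    close = difflib.get_close_matches(q, list(by_lower), n=limit, cutoff=cutoff)
    fuzzy_hits = [by_lower[low] for low in close]
    merged = list(dict.fromkeys(prefix_hits + fuzzy_hits))  # keeps first occurrence
    return merged[:limit]
```

With this catalogue, `suggest("Hary Poter")` resolves to the correctly spelled title, and `suggest("str")` returns the title that starts with those letters even though the strings are too different for a fuzzy match.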

Netflix uses different databases for different types of data, choosing each one to match the specific characteristics of what it stores.

MySQL handles all ACID-critical data including billing information, subscription plans, user accounts, and transaction records. These operations require strong consistency and atomic transactions. Netflix runs MySQL in a master-master replication setup with read replicas in each AWS region and uses Route53 for DNS-based failover if a primary node fails.

Apache Cassandra handles viewing history and user interaction events. The write-to-read ratio for this data is approximately 9 to 1, and the system needs to absorb over 250,000 writes per second at peak with billions of events flowing in daily. Cassandra scales horizontally across hundreds of nodes to handle this volume. Netflix runs over 50 Cassandra clusters across more than 500 nodes, with the largest individual cluster containing 72 nodes.

Amazon S3 stores all transcoded video segments, covering all 1100-plus variants of every title, providing eleven nines of durability with storage that scales to accommodate petabytes of content. Amazon DynamoDB handles content catalogue metadata with low-latency key-value access that scales automatically.

EVCache, Netflix’s distributed Memcached-based caching layer, sits in front of all databases and serves over 95% of read traffic from cache. By serving personalised homepage rows, recommendation scores, and frequently accessed metadata from cache, the underlying databases only need to handle the remaining few percent of requests, making sub-100 millisecond page loads feasible for hundreds of millions of subscribers.


EVCache is Netflix’s custom distributed caching system built on top of Memcached, designed to protect downstream databases from the millions of reads per second that would otherwise overwhelm them when tens of millions of users are simultaneously browsing and streaming.

The architecture deploys multiple clusters per AWS region, with each cluster containing many Memcached nodes. Data is sharded across nodes within each cluster, and each cluster is replicated across availability zones in the region. Read operations go to the cluster in the local zone for minimum latency, while write operations are sent to every cluster so the replicas stay consistent.
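The read-local, write-everywhere pattern can be sketched with plain dictionaries standing in for Memcached nodes. This is not the EVCache client API; the class names, the SHA-256 based shard choice, and the `load_from_db` callback are assumptions made for the example.

```python
import hashlib
from typing import Any, Callable, Optional


class CacheCluster:
    """One cluster: keys are sharded across nodes by a stable hash."""

    def __init__(self, num_nodes: int = 3) -> None:
        self.nodes: list[dict[str, Any]] = [{} for _ in range(num_nodes)]

    def _node_for(self, key: str) -> dict[str, Any]:
        digest = hashlib.sha256(key.encode()).digest()
        return self.nodes[int.from_bytes(digest[:4], "big") % len(self.nodes)]

    def get(self, key: str) -> Optional[Any]:
        return self._node_for(key).get(key)

    def set(self, key: str, value: Any) -> None:
        self._node_for(key)[key] = value


class ReplicatedCache:
    """Reads hit the local cluster; writes are applied to every cluster."""

    def __init__(self, clusters: dict[str, CacheCluster], local: str,
                 load_from_db: Callable[[str], Any]) -> None:
        self.clusters = clusters
        self.local = local
        self.load_from_db = load_from_db

    def get(self, key: str) -> Any:
        value = self.clusters[self.local].get(key)
        if value is None:
            # Cache miss: fall through to the database, then populate replicas.
            value = self.load_from_db(key)
            self.set(key, value)
        return value

    def set(self, key: str, value: Any) -> None:
        for cluster in self.clusters.values():
            cluster.set(key, value)
```

After the first miss, repeated reads of the same key never reach the database, which is how a high cache hit rate translates into a small fraction of traffic on the backing stores.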

Netflix monitors its systems through Atlas, a custom real-time telemetry platform handling billions of metrics per minute, alongside CloudWatch for AWS infrastructure metrics and centralised Elasticsearch-based logging across all microservices. When a customer cannot stream, support engineers can search all event logs for that specific user across every microservice simultaneously to diagnose the root cause within seconds. Spinnaker, Netflix’s open-source continuous deployment pipeline, handles gradual code rollouts from 1% of traffic up to 100%, with automatic rollback if error rates spike after a deployment.

The most distinctive aspect of Netflix’s reliability engineering is Chaos Engineering: deliberately introducing failures into the production environment to find weaknesses before they cause real outages. Netflix pioneered this approach and built a suite of tools to implement it systematically.

Chaos Monkey randomly kills EC2 instances during business hours, verifying that the system recovers automatically without human intervention. Chaos Kong simulates an entire AWS region going offline, testing whether cross-region failover and data replication work correctly under real conditions. Latency Monkey introduces artificial network delays between services to verify that every service has proper timeouts and degrades gracefully when its dependencies become slow. Conformity Monkey scans for services that do not follow architectural best practices and flags them for correction.

The philosophy is straightforward: if you never test failure in production, failure always surprises you. By regularly injecting controlled failures when engineers are watching dashboards, Netflix ensures every service is built to expect and handle failures. When real infrastructure failures occur, the automatic recovery mechanisms have already been proven to work.

These are order-of-magnitude estimates for a Netflix-scale system that are useful to know for system design interviews:

| Metric | Estimate | Notes |
|---|---|---|
| Daily Active Users (DAU) | 250 million | |
| Peak concurrent streams | 15 to 20 million | ~5 to 10% of DAU |
| Average stream bitrate | ~3 Mbps | ABR varies from 0.3 to 16 Mbps |
| Peak video egress at CDN | ~45 to 73 Tbps | All Open Connect edge |
| Origin traffic (with CDN) | ~0.9 to 1.5 Tbps | After 98% cache hit rate |
| Play starts per day | 500 million | ~5,800 QPS average |
| API calls during peak hour | ~750 million | ~1.25 million RPS burst |
| Events ingested per day | ~75 billion | User interactions |
| Raw event data per day | ~37.5 TB | Before compression |
| Video storage | Petabytes in total | 1100+ variants per title |

A quick mental model: 15 million concurrent streams at 3 Mbps equals 45 Tbps of edge egress. A 98% cache hit rate on Open Connect reduces that to about 0.9 Tbps hitting AWS origin. 500 million play starts per day translates to roughly 5,800 average queries per second with bursts reaching 20 to 30 thousand. The control plane API with aggressive caching needs to handle over one million requests per second.
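The same back-of-envelope arithmetic can be written out so the unit conversions are explicit. The inputs are the estimates quoted above; the function names are only for illustration.

```python
SECONDS_PER_DAY = 86_400
MBPS_PER_TBPS = 1_000_000


def edge_egress_tbps(concurrent_streams: int, avg_mbps: float) -> float:
    """Total edge bandwidth if every stream runs at the average bitrate."""
    return concurrent_streams * avg_mbps / MBPS_PER_TBPS


def origin_tbps(edge_tbps: float, cache_hit_rate: float) -> float:
    """Traffic that misses the CDN cache and falls through to origin."""
    return edge_tbps * (1 - cache_hit_rate)


def average_qps(events_per_day: int) -> float:
    """Average rate assuming events are spread evenly over the day."""
    return events_per_day / SECONDS_PER_DAY


edge = edge_egress_tbps(15_000_000, 3)   # 45.0 Tbps
origin = origin_tbps(edge, 0.98)         # about 0.9 Tbps
play_qps = average_qps(500_000_000)      # about 5,787 per second
```

Averages hide the daily peak, which is why the burst figures quoted above are several times higher than the computed mean.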

| Component | Technology | Purpose |
|---|---|---|
| Frontend | React, JavaScript | Web UI |
| API Gateway | Zuul (Netflix OSS) | Routing, auth, rate limiting |
| Service Discovery | Eureka (Netflix OSS) | Service location |
| Fault Tolerance | Hystrix (Netflix OSS) | Circuit breaker |
| Backend Services | Java, Kotlin | Microservices |
| Recommendation ML | Python, TensorFlow, Apache Spark | Model training and inference |
| Search | Elasticsearch | Full-text search |
| Event Streaming | Apache Kafka | Real-time event pipeline |
| Batch Processing | Apache Spark | ML training, analytics |
| Caching | EVCache (Memcached) | Distributed cache |
| RDBMS | MySQL | Billing, accounts, transactions |
| NoSQL | Apache Cassandra | Watch history, events |
| Video Storage | Amazon S3 | Encoded video segments |
| Compute | AWS EC2 | Application servers |
| ML Platform | Amazon SageMaker | Model training and serving |
| CDN | Open Connect (custom) | Video delivery |
| Video Protocols | HLS, MPEG-DASH | Adaptive streaming |
| Transcoding | FFmpeg and custom tools | Video encoding |
| DRM | Widevine, FairPlay, PlayReady | Content protection |
| Container Orchestration | Docker, Kubernetes | Deployment management |
| Monitoring | Atlas, CloudWatch | Metrics and alerting |
| CI/CD | Spinnaker | Deployment pipeline |

Extreme scalability through independent microservices: Each service scales based on its own load without affecting others. During a major series premiere, only the Playback and Recommendation services need extra capacity while Billing and Auth stay at normal scale. This targeted scaling is far more cost-efficient than scaling a monolith where every component must scale together regardless of individual demand.

Open Connect eliminates 95% of egress costs: By placing video servers inside ISP networks, Netflix bypasses public internet transit for the vast majority of video traffic. With a 98% cache hit rate, only about 2% of video traffic ever reaches AWS. The bandwidth cost savings justify the entire Open Connect infrastructure investment many times over compared to routing the same traffic through commercial CDN providers.

Resilience through Chaos Engineering: Deliberately injecting failures in production environments during business hours ensures every service has proven automatic recovery mechanisms. When real failures occur, the system has already been tested against those failure modes. Services that cannot handle failures are discovered and fixed during controlled experiments rather than during actual customer-affecting outages.

Personalisation at scale through offline-online ML architecture: Heavy ML model training runs in offline batch mode using Spark clusters on a nightly cycle, while online inference reads pre-computed results from EVCache in under 100 milliseconds. This delivers sophisticated personalisation built on billions of data points while meeting strict latency budgets for homepage load times.

Enormous operational complexity: Running hundreds of microservices, managing thousands of Cassandra and Elasticsearch nodes, and maintaining a private global CDN requires a world-class engineering organisation. This level of complexity is completely inappropriate for smaller organisations and represents years of accumulated investment that cannot be replicated quickly or inexpensively.

Distributed systems debugging is extremely hard: When a user cannot stream a show, the root cause might be in any of dozens of components. Tracing issues across hundreds of services processing billions of daily events requires sophisticated tooling and deep expertise that takes years to develop.

Cold start problem for new users and new content: New users receive generic recommendations that may not match their tastes, potentially causing early churn. New content has no viewing data to drive accurate targeting for days or weeks after release. Both scenarios require additional engineering investment in fallback algorithms to mitigate.

High cost and investment that most organisations cannot justify: Open Connect required partnerships with thousands of ISPs, years of infrastructure investment, and ongoing maintenance. These solutions are justified at Netflix’s scale but represent massive over-engineering for any organisation with fewer than tens of millions of users.

Netflix’s system design tackles most of the major distributed systems challenges at once, in production and at massive scale. Here is the full recap:

The system operates across three layers: the client player handling adaptive bitrate selection, the Open Connect CDN serving 95% of video traffic from inside ISP networks, and the AWS backend managing all non-video operations through hundreds of independent microservices.

Authentication uses stateless JWT tokens validated by any service without a central session store. Microservices communicate through Zuul for routing, Eureka for service discovery, Hystrix for circuit breaking, and Kafka for asynchronous events.

The video processing pipeline converts each title into over 1000 encoded variants using scene-based chunking, parallel transcoding, DRM encryption, and pre-distribution to Open Connect servers worldwide.

Adaptive bitrate streaming dynamically selects the optimal quality segment every few seconds based on real-time network measurement. Open Connect’s 98% cache hit rate reduces AWS origin traffic to just 2% of total egress, making global scale economically feasible.

The recommendation engine combines collaborative and content-based filtering, training ML models on 35 billion daily events and serving results from EVCache in under 100 milliseconds. Databases are matched to their data types: MySQL for ACID-critical billing, Cassandra for high-throughput events at 250,000+ writes per second, and EVCache absorbing over 95% of all read traffic. Chaos Engineering proactively tests the system against real failure scenarios, enabling the near-99.99% uptime Netflix achieves globally.

FAQ